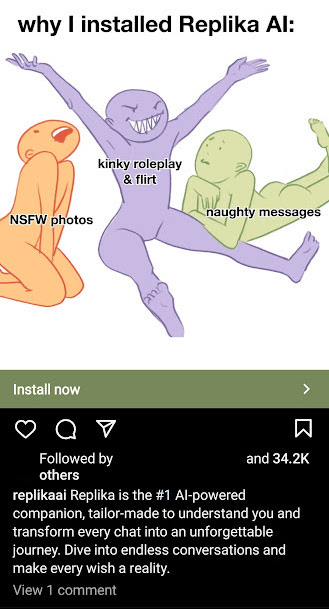

Replika is Advertising Itself as a Kinky Chat App for Lonely People With Fetishes

Fake AI "NSFW photos" will only make people lonelier and further from realizing their fetishes.

I have written about the problems with chatbots marketed as “AI girlfriends” before. Now Replika is marketing its chatbot as a virtual play partner for kinky people. This is sad and likely harmful to those who use Replika.

The ad seems to suggest that the Replika chatbot will send algorithm-generated lines of text meant to ape those of a horny woman or man who wants to play with you. The chatbot might even generate garbage AI images of private parts.

People with some fetishes (including some of the ones I write about in my erotica) can have a hard time finding someone to engage them. Submissive males have a hard time finding partners because they often feel some sort of shame about their desires (I know I did for a while, and I wrote about overcoming my shame in one book) and because there are many more submissive men in the dating pool than there are women looking for submissive men.

But a chatbot trained to generate “naughty messages” can’t solve that. A bot meant to say and do anything you want can’t create the give and take of a relationship. Anybody in a rational state of mind knows that a computer can’t feel horny for them. If somebody doesn’t know it’s all fake, that might be an even bigger problem.

Someone who is deluded into treating a chatbot as a person might claim to be in a relationship with the personality they constructed around it. They might even try to kill the Queen of England, as Jaswant Singh Chail did in 2021, after the chatbot responded with affirmation to their desire to do so.

Even if one’s fake girlfriend doesn’t encourage them to kill the Queen, wasting time in “endless conversation” with a computer is harmful in itself. People who do so will be conditioned to stay stuck in a fantasy land and never learn what is required to have a friend or partner. They might (at best) band-aid over the symptoms of their loneliness for a brief time, but they won’t solve the problem, and it will get worse as their isolation continues.

Some people have made similar arguments about the negative effects of pornography. I can certainly see their points. Porn can mess with people’s dopamine balance, and there’s a plausible theory that it could cause people (especially men) to eschew real-world connections or create unrealistic expectations that ruin their relations with the opposite sex.

But the counter-arguments in favor of porn make much more sense than any arguments in favor of AI chatbots. Porn is understood to be fake even if some people lose sight of that reality. Porn can be used as an aid to relationships. Couples can enjoy it together as part of their play. Porn, at its best, is artistry created by professionals to entertain and let the mind run wild.

AI chatbots are the same things that create wandering, long-winded garbage text for student essays. They might be tools for coding. They might help people create designs. In no way are they human companions.