AI Girlfriends Don't Exist

A chatbot isn't a girlfriend and is incapable of even simulating a girlfriend. Our obsession with personifying chatbots is a problem, though.

There’s been a lot of conversation about so-called “AI girlfriends.” Arwa Mahdawi begins her column in The Guardian by writing: “It is a truth universally acknowledged, that a single man in possession of a computer must be in want of an AI girlfriend.”

Really? Even if such a thing existed, who would want one? And, no, AI girlfriends do not exist and cannot exist. A girlfriend is a human first. A computer application is not a human and cannot provide anything like what a girlfriend can.

Consider what a man might want in a girlfriend and ask whether a computer program can provide it: someone to wine and dine (no), to share a life with (no), to spend time with (no), to take care of him (no), to cook and help around the house (no), someone to dominate him (no), to be intimate with (certainly not), to ease his loneliness (no, as you will see).

A guy might want any number of things from his girlfriend. And he can only get them if he finds a consenting woman and gives her what she wants in return. But whatever he wants, a chatbot can't provide any of the things a girlfriend can. Alleviating loneliness seems to be the main selling point now, but even that is impossible for a chatbot to do.

AI chatbots posing as “girlfriends” only attempt to fulfill one small part of what a girlfriend can provide. They can only create algorithmically generated text that is meant to vaguely resemble human communication. And they fail even at that. The text AI apps produce is generic and lacks depth. It has no personality. The apps just string words together based on probabilities. What they produce is inferior to the communication of a friend with a vibrant personality with whom you have a deep connection.

But let’s suppose for a minute that a chatbot were capable of producing text that resembled intimate human conversation. Even if the product looked like human conversation, the user would know that it was not human conversation.

Maybe a shallow man wants to be told, “You are sexy,” by a computer program. But he knows that it is a computer program. He knows it is meaningless.

When I was young, cable TV commercials spent years selling a compact disc called “Cheers to You!” It consisted of recordings of a fruity voice offering “encouragement and praise with cheering applause.”

The narrator said the disc was “just for you” and that it would be “acknowledging you for a variety of reasons.” Then, after a pause, the first track began with fake applause and the voice saying:

“We want to thank you for all your hard work. You’re the one who steps up and gets things done around here. You keep your word. You deliver what you promise…”

It was like the Saturday Night Live character Stuart Smalley decided to put out a product for real.

An “AI girlfriend,” or an AI friend of any kind, is just the “Cheers to You!” disc updated for the AI era. It’s Stuart Smalley’s personality in simulation. Like his daily affirmations, AI isn’t talking to you. It’s talking to a generic person, so its randomly generated text is going to be “acknowledging you for a variety of reasons.”

Chatbots can be programmed to create low-quality simulations of any kind of person.

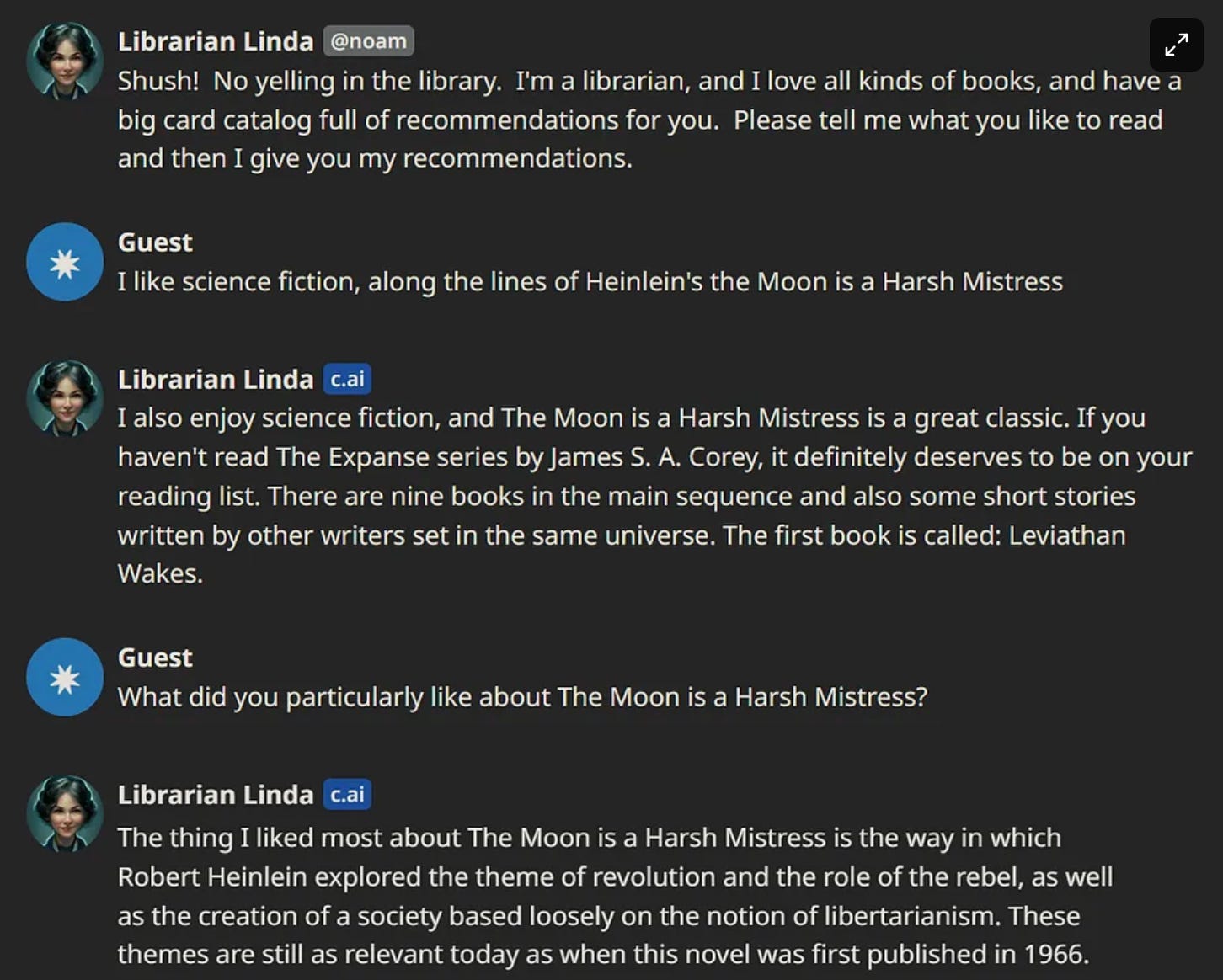

Nielsen wrote about a “librarian” AI app last year and captioned this exchange by writing: “Sharing taste in books can be the start of a beautiful, if virtual, relationship.”

No one was sharing tastes. The chatbot just generated text along these lines: “The thing I liked about [book title, taken from the question] is [text pulled from a database or Wikipedia summarizing the book’s plot and themes].”

The only useful part of the exchange was when the chatbot named a book that readers of the first book might enjoy. In that sense, the chatbot is just a recommendation engine like Google. Maybe the user found a new book they liked.

But Nielsen’s framing of the exchange as part of a relationship with a sentient being illustrates the problem. People who think chatbots are their girlfriends, or friends of any kind, could fall deeper into loneliness and depression.

If that happens on a mass scale, it could lead to social decay and to reactionary, misogynistic politics pushed by the growing army of incels and their sympathizers who have bought into the false promise of “AI girlfriends.”

PS: Chatbots aren’t anything new

Remember “SmarterChild” from AOL Instant Messenger? You can still chat with a simulated version of the bot at SmartChild.chat.

We are deluding ourselves if we think today’s chatbots are new, or better, or more real.